Every evening, our backyard camera captured dozens of motion events - mostly wind, insects, or shadows. But hidden in the noise were possums. What if we could teach a machine to see, filter noise, and tell us only when it matters? This project explores my journey from raw IR footage to a robust, real-time possum detector.

Those late-night visits were not just interesting observations - they posed a real risk. Possums regularly enter the backyard, inevitably attracting the attention - and hunting instincts - of our dog, Beau. To prevent potentially dangerous encounters, the long-term vision was a smart dog-door mechanism that automatically closes whenever a possum is detected, keeping wildlife safe and Beau indoors. Before integrating anything into the door, however, we started with a simpler, controlled prototype: a small box with a carrot inside. This setup allowed us to reliably attract possums to a fixed location, making detection easier to test, measure, and iterate.

At the same time, the project evolved beyond detection. The data began to reveal patterns - recurring visit times, frequency trends, behavioral consistency. Understanding these patterns became just as compelling as solving the detection problem itself.

Technically, the challenge is significant. Night-time infrared footage is inherently noisy: insects, wind-driven vegetation, small animals, and sensor artifacts can all resemble meaningful motion. The task was clear - build a system that reliably distinguishes possums from environmental noise in real time, while minimizing false alarms.

Infrared footage at night is chaotic. Insects pass close to the lens, wind moves vegetation, rain reflects light, and small animals trigger motion events. Once cropped to a region of interest (ROI), many of these look convincingly similar to a possum.

Possums don't behave like clean training data. They freeze for minutes, hide in tall grass, disappear behind fences, and suddenly reappear. A single visit can easily fragment into multiple detections — or be missed entirely if motion is too subtle.

Consecutive frames are nearly identical. Without careful aggregation logic, the system either floods the user with alerts or splits one event into many. Defining what constitutes a "visit" required more than classification: it required temporal reasoning.

True possum appearances are rare. Most motion events are noise. Crops can be blurred, partially occluded, or poorly illuminated. Constructing a representative dataset — especially high-quality negative samples — is both critical and labor-intensive.

The system runs continuously. Thousands of ROIs must be filtered efficiently without overwhelming the CNN. At the same time, video must be recorded smoothly, events consolidated correctly, and the pipeline optimized for potential edge deployment.

Dataset: total images, split into train, validation, and test sets.

Collection period: Aug 2025 - Feb 2026

Source: backyard night camera

Labeling: motion-detection ROI extraction, manually reviewed and labeled

ROIs from the same night session were kept together in either train or test sets to prevent temporal data leakage.
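A session-level split like the one described above can be sketched as follows. This is a minimal illustration, not the project's actual code: the `(session_id, roi_path)` record shape, the function name `split_by_session`, and the 20% test fraction are all assumptions made for the example.

```python
import random
from collections import defaultdict


def split_by_session(rois, test_fraction=0.2, seed=0):
    """Split ROI records so each night session lands entirely in one set.

    `rois` is a list of (session_id, roi_path) pairs (hypothetical shape).
    Returns (train_paths, test_paths, test_session_ids).
    """
    by_session = defaultdict(list)
    for session_id, roi_path in rois:
        by_session[session_id].append(roi_path)

    # Shuffle whole sessions, never individual ROIs, so that near-identical
    # frames from one night cannot end up on both sides of the split.
    sessions = sorted(by_session)
    random.Random(seed).shuffle(sessions)

    n_test = max(1, round(len(sessions) * test_fraction))
    test_sessions = sessions[:n_test]
    train_sessions = sessions[n_test:]

    train = [p for s in train_sessions for p in by_session[s]]
    test = [p for s in test_sessions for p in by_session[s]]
    return train, test, test_sessions
```

A validation set can be carved out the same way by applying the function a second time to the training sessions.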

Motion-blurred possum images were kept in training to reflect realistic night conditions.

Each motion trigger generates a cropped Region of Interest (ROI). Some are clean and obvious. Others are ambiguous, blurred, or deceptively similar to a possum. Below are representative examples.

[Image gallery: eight example ROI crops]

The model must learn to:

In practice, the quality and diversity of ROIs had a greater impact on system reliability than minor architectural changes to the CNN.

The goal was not just high classification accuracy, but reliable real-time detection in a noisy outdoor environment.

The system combines lightweight motion detection with a fine-tuned CNN. Motion detection extracts candidate ROIs, dramatically reducing computational load, while the CNN verifies each crop for final classification.

At its core is a pretrained ResNet18, fine-tuned to adapt from standard ImageNet features to infrared night imagery — a domain with distinct texture patterns, contrast behavior, and noise characteristics.

To ensure stability in real time, a detection is confirmed only if a possum is classified in at least 3 of the last 5 processed frames, reducing flicker and isolated false positives.

The model was trained for 8 epochs using transfer learning with ResNet18. It reached a test accuracy of 99.30% and a ROC AUC of 0.9992, which indicates strong generalization to the held-out test set with little sign of overfitting.

Confusion matrix cells (share of test images): 54.9%, 0.0%, 0.7%, 44.4%
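As a quick consistency check, the four percentages above can be read as the cells of a 2x2 confusion matrix. The cell labels are not given here, so the layout used below (row by row, with the correct predictions first and last) is an assumption.

```python
# Cell shares in the order listed above.
# Assumption: cells[0] and cells[3] are the correct (diagonal) predictions,
# cells[1] and cells[2] are the two kinds of error.
cells = [54.9, 0.0, 0.7, 44.4]

correct = cells[0] + cells[3]
errors = cells[1] + cells[2]
accuracy = correct / (correct + errors)

print(f"accuracy = {accuracy:.3f}")
```

Under that reading the diagonal sums to the 99.30% test accuracy reported for the model, and all remaining errors fall into a single off-diagonal cell.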

A possum is considered detected only if it appears in at least 3 out of the last 5 processed frames. This sliding window approach:

Reduces single-frame misclassifications

Stabilizes predictions in noisy night conditions

Ensures robust detection when possums move slowly or remain stationary
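The 3-of-5 rule can be sketched with a fixed-length queue of recent per-frame predictions. This is a minimal illustration under assumed names (`TemporalVoter`, `update`), not the project's actual implementation.

```python
from collections import deque


class TemporalVoter:
    """Confirm a detection only when enough recent frames agree."""

    def __init__(self, window=5, required=3):
        self.required = required
        # deque(maxlen=...) drops the oldest prediction automatically.
        self.recent = deque(maxlen=window)

    def update(self, is_possum):
        """Record one per-frame CNN decision and return the smoothed result."""
        self.recent.append(bool(is_possum))
        return sum(self.recent) >= self.required


voter = TemporalVoter()
frame_predictions = [True, False, True, False, True, False, False, False]
decisions = [voter.update(p) for p in frame_predictions]
print(decisions)
# -> [False, False, False, False, True, False, False, False]
```

The detection fires only on the fifth frame, once three of the last five predictions are positive, and it clears again as soon as the window holds fewer than three.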

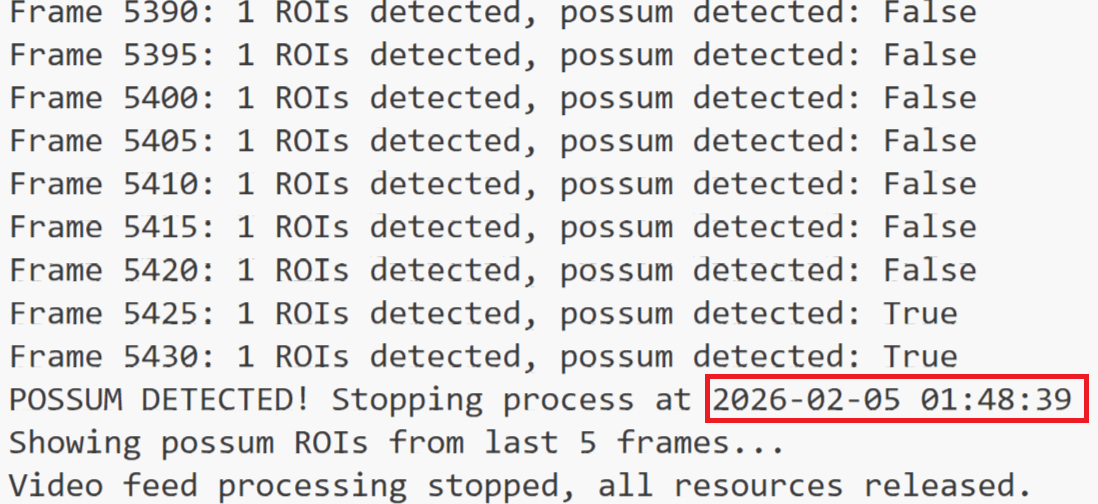

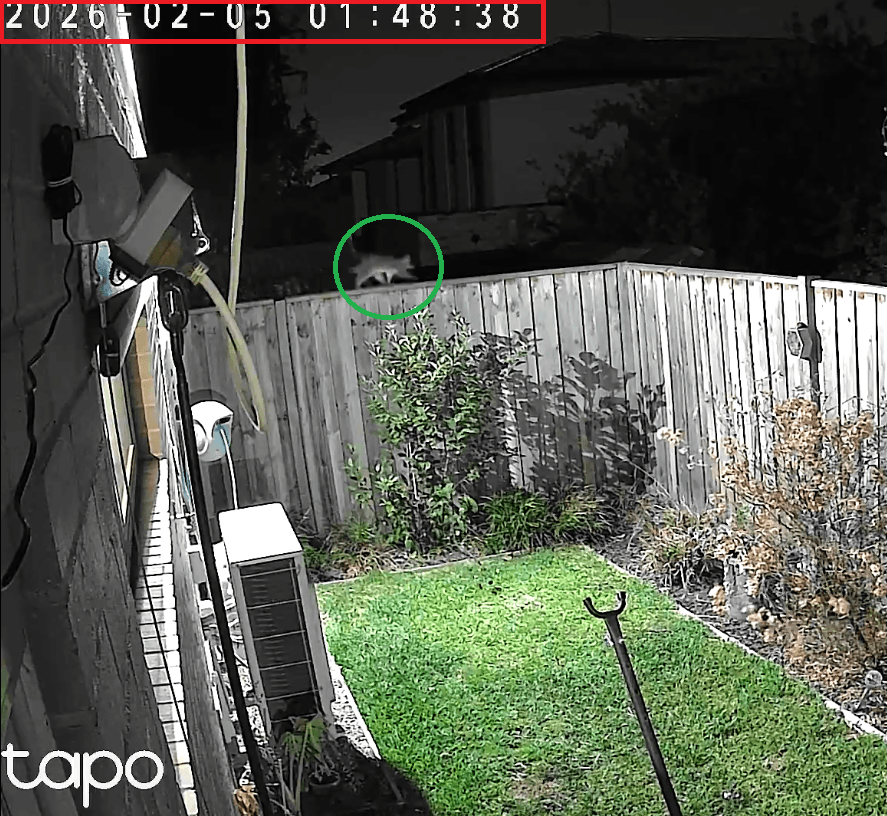

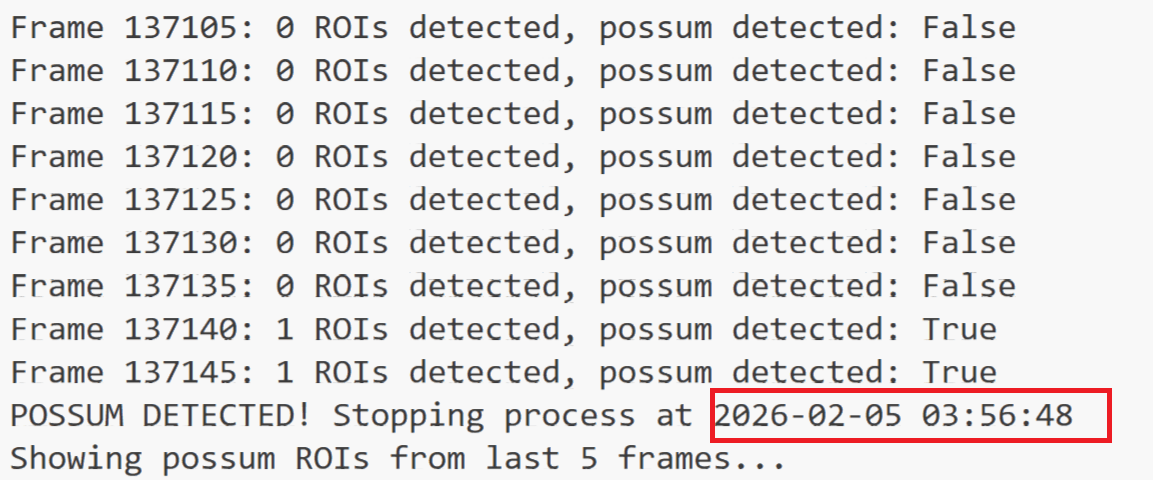

Real-time examples captured during live camera inference. The system reports a possum detection only after satisfying the temporal consistency rule.

A sample recording from the backyard night camera, showing the type of footage the detection system processes in real time.

Performance may degrade under extreme weather conditions such as heavy rain, strong wind, or excessive infrared noise. Severe visual distortion affects both motion detection and ROI quality.

The pipeline relies on motion as a first-stage trigger. Completely static possums may be temporarily missed until movement resumes.

Possum appearances are relatively rare, limiting the variability of training examples. Unusual poses, lighting conditions, or rare behavioral patterns may be underrepresented.

Low-resolution cameras, distant subjects, or suboptimal angles reduce crop clarity and can impact classification confidence.

The system is trained on data collected in this specific backyard environment. Changes in background structure, vegetation, camera placement, or lighting conditions may require additional data collection and retraining to maintain performance in a new setting.

As with most supervised systems, previously unseen objects or rare edge cases may occasionally be misclassified.

All videos and ROI images are stored in Google Cloud Storage. Secure signed URLs are generated dynamically for temporary browser access.
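Generating a temporary link could look like the sketch below. It relies on the `generate_signed_url` method of `google.cloud.storage.Blob`; the helper names, the 15-minute lifetime, and the bucket and object names are assumptions chosen for illustration.

```python
from datetime import timedelta


def signed_media_url(blob, minutes=15):
    """Return a temporary, read-only URL for one stored video or ROI image.

    `blob` is expected to behave like google.cloud.storage.Blob.
    The 15-minute default lifetime is an assumed value.
    """
    return blob.generate_signed_url(
        version="v4",
        expiration=timedelta(minutes=minutes),
        method="GET",
    )


def get_blob(bucket_name, object_name):
    """Look up a blob handle (bucket and object names are hypothetical)."""
    # Imported lazily so signed_media_url can be exercised without the
    # client library or credentials being present.
    from google.cloud import storage

    return storage.Client().bucket(bucket_name).blob(object_name)
```

Because the URL expires, the browser never needs long-lived credentials and the bucket itself can stay private.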

Structured visit metadata (timestamps, duration, representative ROI, approval status) is stored in Google Cloud SQL (MySQL). Media and metadata are intentionally separated for scalability and clean architecture.

/visits — visit statistics by date range

/videos_rois — per-night visits with signed media URLs

/recent_activity — latest detections

/statistics/dashboard — aggregated behavioral analytics

When a new video is uploaded, Cloud Storage triggers a Cloud Run service that converts the video to a browser-compatible format (H.264 with the faststart flag). Metadata marks videos that have already been converted, which prevents reprocessing. The result is smooth playback directly in the web interface.
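The conversion step might be sketched as below, assuming `ffmpeg` is available inside the service. The metadata key `web_ready`, the function names, and the `yuv420p` pixel format are assumptions for illustration; only H.264 and faststart come from the description above.

```python
import subprocess

PROCESSED_KEY = "web_ready"  # hypothetical metadata key


def needs_conversion(metadata):
    """True unless the object's metadata already marks it as converted."""
    return (metadata or {}).get(PROCESSED_KEY) != "true"


def ffmpeg_command(src, dst):
    """Build an ffmpeg call that re-encodes to H.264 with faststart."""
    return [
        "ffmpeg", "-y",
        "-i", src,
        "-c:v", "libx264",          # H.264 video
        "-pix_fmt", "yuv420p",      # assumed: widest browser support
        "-movflags", "+faststart",  # move the index to the file start
        dst,
    ]


def convert(src, dst, metadata):
    """Convert once; skip files that were already processed."""
    if not needs_conversion(metadata):
        return False
    subprocess.run(ffmpeg_command(src, dst), check=True)
    return True
```

The faststart flag moves the MP4 index to the beginning of the file, so a browser can begin playback before the whole video has downloaded.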

A scheduled Cloud Run job merges fragmented detections into single visits using database locks to prevent concurrency conflicts.
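The merging logic itself can be sketched as an interval merge over detection time spans. The 5-minute gap threshold and the `(start, end)` tuple shape are assumptions, and the database locking mentioned above is left out of this sketch.

```python
from datetime import datetime, timedelta


def merge_into_visits(detections, max_gap=timedelta(minutes=5)):
    """Merge (start, end) detections separated by at most `max_gap`.

    The 5-minute default is an assumed threshold, not a documented value.
    """
    visits = []
    for start, end in sorted(detections):
        if visits and start - visits[-1][1] <= max_gap:
            # Close enough to the previous visit: extend it.
            visits[-1][1] = max(visits[-1][1], end)
        else:
            visits.append([start, end])
    return [tuple(v) for v in visits]


night = datetime(2025, 9, 1)
detections = [
    (night.replace(hour=22, minute=4), night.replace(hour=22, minute=6)),
    (night.replace(hour=22, minute=0), night.replace(hour=22, minute=2)),
    (night.replace(hour=23, minute=30), night.replace(hour=23, minute=31)),
]
visits = merge_into_visits(detections)
print(len(visits))  # -> 2
```

The first two detections are two minutes apart and collapse into one visit, while the third is far enough away to count as a separate visit.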

While the backyard detector reveals when possums visit our garden, it raises a broader ecological question — how common are possum sightings across Australia?

To explore this, I integrated large-scale biodiversity observation data from the Atlas of Living Australia. Approximately 200,000 occurrence records (2020–present) for four possum species were imported and stored in a MySQL database.

Observations are aggregated using hexagonal spatial grids to visualize sighting density across Australia. The data pipeline automatically retrieves new records and stores them in a structured spatial database for fast querying.
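Hexagonal binning can be illustrated with a small pure-Python sketch. It is not necessarily how the project does it: the grid system is not specified here, so the code treats coordinates as planar x/y values and uses a pointy-top axial layout with cube rounding. The names `hex_cell` and `hex_counts` and the cell `size` are hypothetical.

```python
import math
from collections import Counter


def _round_axial(q, r):
    """Round fractional axial coordinates to the nearest hex cell."""
    s = -q - r
    rq, rr, rs = round(q), round(r), round(s)
    dq, dr, ds = abs(rq - q), abs(rr - r), abs(rs - s)
    # Re-derive the coordinate with the largest rounding error so that
    # q + r + s == 0 still holds.
    if dq > dr and dq > ds:
        rq = -rr - rs
    elif dr > ds:
        rr = -rq - rs
    return rq, rr


def hex_cell(x, y, size):
    """Map a planar point to the (q, r) index of a pointy-top hexagon."""
    q = (math.sqrt(3) / 3 * x - y / 3) / size
    r = (2 / 3 * y) / size
    return _round_axial(q, r)


def hex_counts(points, size):
    """Count observations per hex cell."""
    return Counter(hex_cell(x, y, size) for x, y in points)


counts = hex_counts([(0.0, 0.0), (0.1, 0.1), (1.7, 0.0)], size=1.0)
```

For real longitude and latitude values, the points would first need to be projected, or a geospatial hex-grid library used instead, since raw degrees do not form an equal-area plane.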

Precise monitoring in a single backyard — visit timing, duration, frequency, and individual possum identification via CNN detection.

National patterns of possum observations across Australia — species distribution, regional density, and temporal trends from 120M+ ALA records.

01. Extend detection beyond analytics by connecting it to physical devices, such as automated feeding mechanisms or intelligent dog-door locks, creating a closed-loop safety system.

02. Experiment with training a CNN from scratch to compare against the fine-tuned ResNet18 baseline and better understand domain-specific feature learning in infrared imagery.

03. Develop a richer web dashboard to visualize long-term behavior patterns, seasonal trends, visit frequency, movement heatmaps, and activity timing.

04. Optimize the pipeline for lightweight edge devices, enabling fully local, low-latency detection without reliance on cloud infrastructure.